AI Isn’t the Story — People Are: What 75 Real Experiences Reveal

EuroSense is a citizen science platform born within Volt Europa. It collects real stories from real people across Europe to feed genuine lived experience into democratic and policy conversations. We do this using participatory sensemaking tools and practices. We don't ask yes/no questions. We ask people to share a real story and then interpret it themselves.

We recently reviewed a collection of stories shared on EuroSense about everyday experiences with AI. Of the 1,100 stories collected at that point, 75 related to AI and digital life.

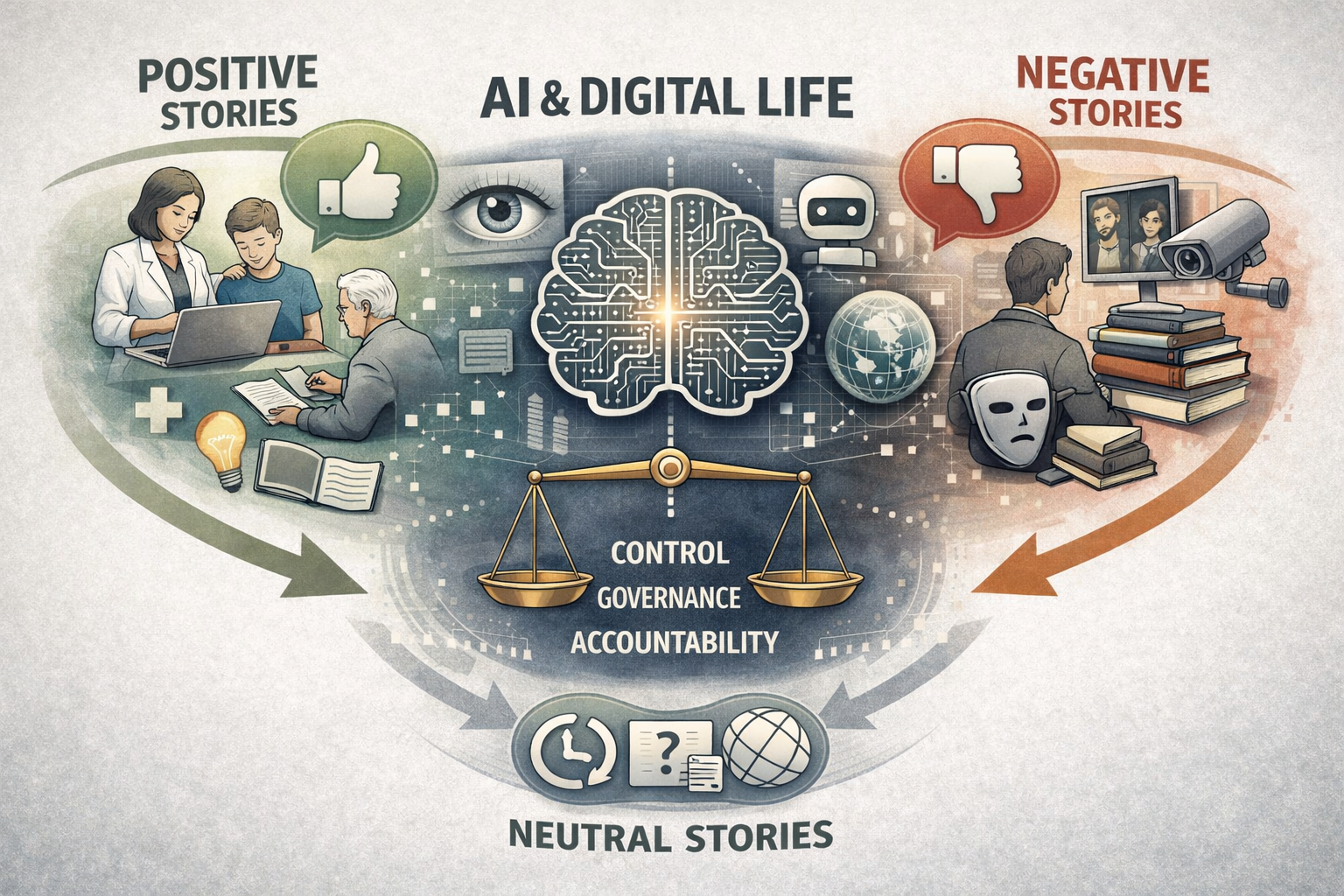

Here are some insights from those 75 stories. Our story collector asks participants to label their stories as positive, negative, or neutral, and this review looks at the common patterns within each category.

The positive stories were almost never about the technology itself. They were about agency:

A medical intern using ChatGPT to catch a rare diagnosis.

A caregiver summarising complex research for her team.

A student finally finding the words for something difficult.

What made these experiences positive wasn't the AI itself but the person remaining clearly in control, with a real outcome that mattered to them.

The negative stories followed a different pattern. They were not really about AI either, but about the absence of accountability around it:

Deepfakes eroding trust in what we see.

Chatbots replacing human contact in healthcare.

Books published under fake authors.

People feeling silenced, replaced, or surveilled without recourse.

The frustration wasn't with the tool. It was with the gap between those making decisions and those living with the consequences.

A smaller number of stories were labeled neutral. These don't present a clear good or bad outcome; instead, they explore ambiguity and trade-offs, such as:

AI helping productivity but creating dependence.

Questions about authorship when AI generates content.

Broader reflections on how digital media shapes knowledge and truth.

The sharpest finding is this: the same technology generates both the most hopeful and the most distressing experiences in our dataset. The pattern is unambiguous. This isn't a debate about the technology itself; it's a debate about governance, accountability, and who stays in control.

This is the power of participatory narrative inquiry at scale. Instead of asking Europeans what they think about AI, EuroSense asks them to share what they have lived — and then interpret it themselves. The result is richer, more honest, and more actionable than any poll.

This is exactly what EuroSense is built to surface: not abstract opinion, but lived texture. The kind of insight that doesn't show up in a survey, but shapes how people trust institutions, engage in democracy, and see their own future.

If you work in policy, tech governance, or civic engagement, our tool and these stories are for you! Get in touch to find out more.

We are still collecting stories on AI-related topics. If you would like to share your experience, please contribute via the following link:

To contribute a story on any other topic, please follow this link:

#EuroSense #CitizenScience #Democracy #AIGovernance #CollectiveIntelligence #ParticipatorySensemaking #VoltEuropa #DigitalDemocracy